Seed Control in Practice: What Reproduces and What Does Not

Same seed, same prompt, same output? Not always. Here is what actually reproduces, and what else you need to lock alongside the seed.

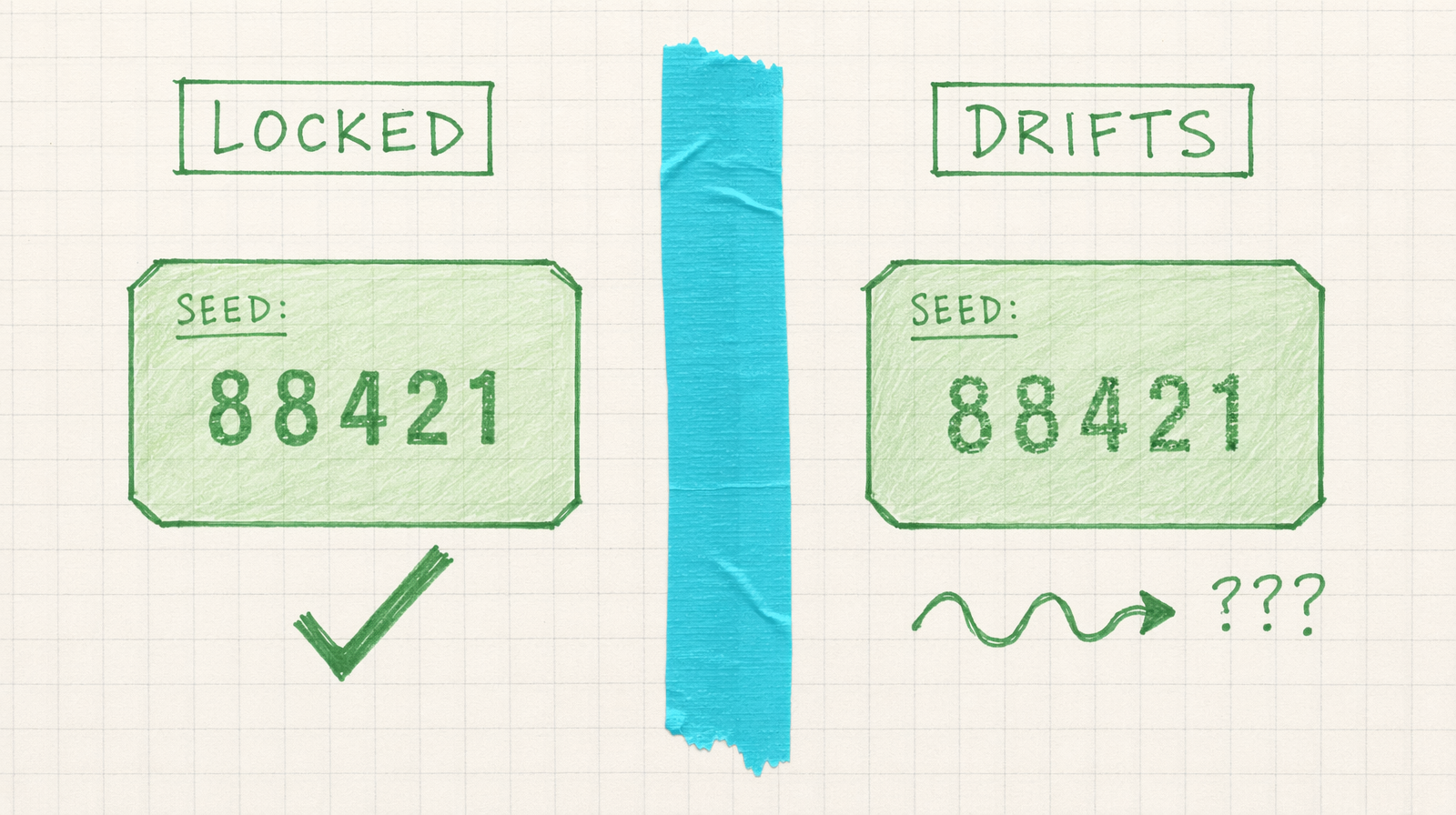

Seeds are the most misunderstood knob in text-to-video. People assume a locked seed guarantees a locked output. It does not. It locks one part of the randomness, and everything else in your stack is free to move.

Here is what actually reproduces when you pass the same seed to fal-ai/wan/v2.7/text-to-video: the initial noise tensor the model starts from. That is it. Same seed, same starting noise. Useful, but not the whole story.

What you also need to lock, in order of impact: the prompt string (every character, including whitespace), the enable_prompt_expansion flag, the model version endpoint, the resolution tier, the duration, the aspect ratio, and the negative prompt. Miss any of those and you get a different clip.
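One way to keep yourself honest about that list is to hash every locked field into a single fingerprint and store it next to the clip. The sketch below is a minimal illustration, not part of any fal tooling: `LOCKED_FIELDS` and `request_fingerprint` are hypothetical names, and the field names are taken from the call shown in this article.

```python
import hashlib
import json

# Fields this article says must be locked alongside the seed.
LOCKED_FIELDS = (
    "prompt",
    "negative_prompt",
    "seed",
    "aspect_ratio",
    "resolution",
    "duration",
    "enable_prompt_expansion",
)


def request_fingerprint(endpoint: str, arguments: dict) -> str:
    """Return a short hash of the endpoint plus every locked field."""
    missing = [name for name in LOCKED_FIELDS if name not in arguments]
    if missing:
        # An unset field falls back to a server default you do not control.
        raise ValueError(f"unpinned fields: {missing}")
    payload = {"endpoint": endpoint}
    payload.update({name: arguments[name] for name in LOCKED_FIELDS})
    # sort_keys gives a canonical string, so dict ordering cannot change the hash.
    canonical = json.dumps(payload, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]
```

Two requests with the same fingerprint were asked for the same thing. A single trailing space in the prompt produces a different fingerprint, which is exactly the behavior you want.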

The sneakiest one is enable_prompt_expansion. Wan 2.7 rewrites your prompt by default before sampling. The rewrite itself uses an LLM that is not seed-pinned the same way the video sampler is. If you want true reproducibility, turn expansion off and write the expanded prompt yourself.

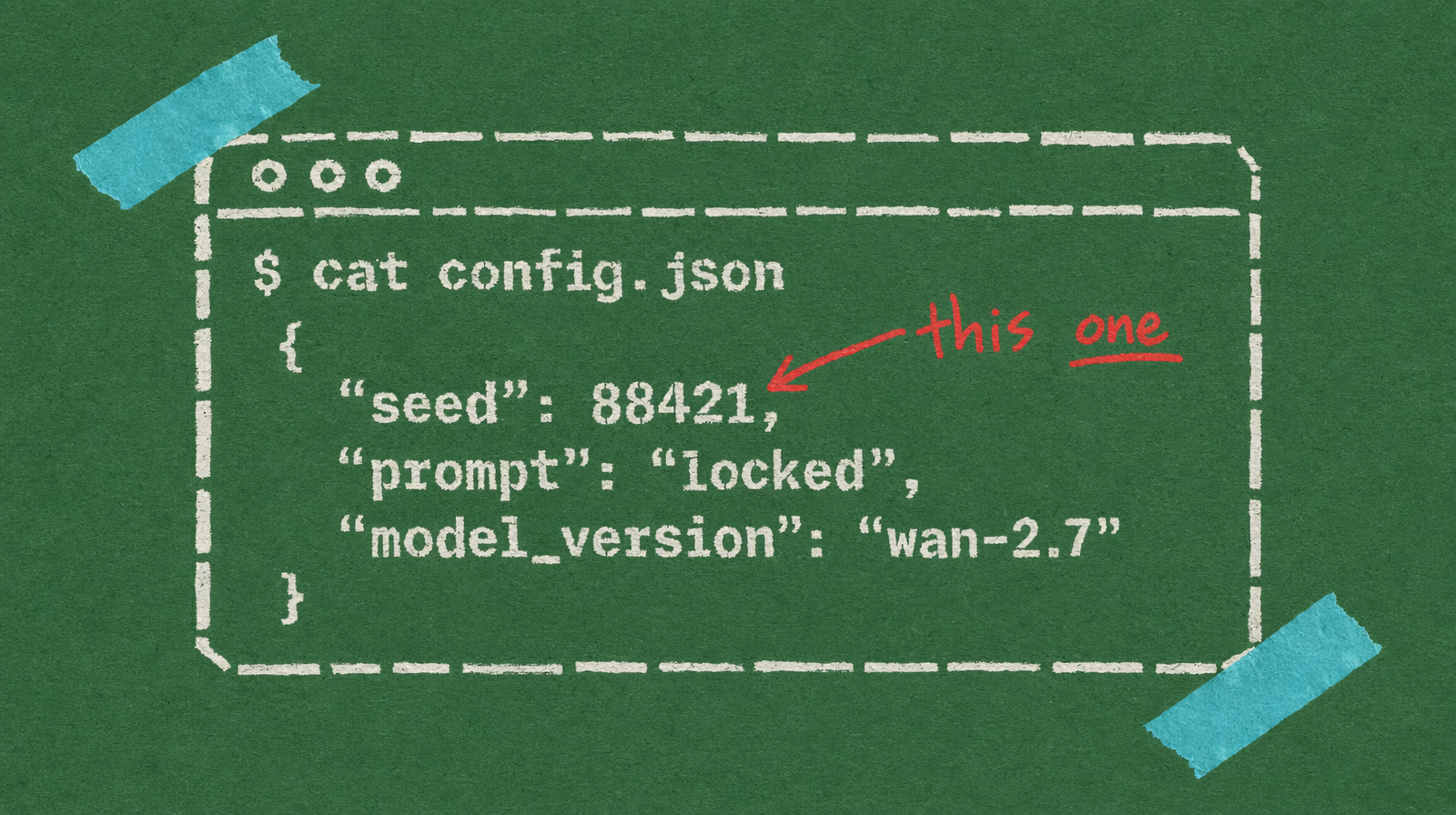

A reproducible call

```python
import fal_client

result = fal_client.subscribe(
    "fal-ai/wan/v2.7/text-to-video",
    arguments={
        "prompt": "slow dolly-in on a red kettle on a wooden stove, steam rising, soft window light, shallow depth of field",
        "negative_prompt": "blurry, low quality, flicker, warped",
        "seed": 88421,
        "aspect_ratio": "16:9",
        "resolution": "1080p",
        "duration": 5,
        "enable_prompt_expansion": False,
    },
)
print(result["seed"], result["video"]["url"])
```

The model always returns the seed it used in the response. Log both the seed and the actual_prompt field. That is your audit trail.
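A minimal sketch of that audit trail, assuming the response is a dict shaped like the one printed in the call above, with `seed`, `actual_prompt`, and `video.url` keys. The helper names `audit_record` and `append_audit` and the JSON Lines layout are illustrative choices, not anything fal prescribes.

```python
import json
import time


def audit_record(arguments: dict, result: dict) -> dict:
    """Pair what was requested with what the model reports it used."""
    return {
        "logged_at": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime()),
        "requested_seed": arguments.get("seed"),
        "returned_seed": result.get("seed"),
        "submitted_prompt": arguments.get("prompt"),
        # Assumed response field; stays None if the response omits it.
        "actual_prompt": result.get("actual_prompt"),
        "video_url": (result.get("video") or {}).get("url"),
    }


def append_audit(path: str, record: dict) -> None:
    """Append one JSON object per line so the log is easy to grep and diff."""
    with open(path, "a", encoding="utf-8") as handle:
        handle.write(json.dumps(record, ensure_ascii=False) + "\n")
```

If `submitted_prompt` and `actual_prompt` ever differ in the log, prompt expansion was on for that render.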

What drifts anyway

Even with all of the above nailed down, outputs drift slightly across model version bumps on the endpoint. fal publishes model updates with new weights. The endpoint string stays fal-ai/wan/v2.7/text-to-video but the underlying checkpoint can change. If your business needs frame-identical reproducibility across months, snapshot the MP4 file and store it. Do not re-render old seeds six months later and expect the same clip.
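Snapshotting can be as simple as downloading the file once and naming it after its own hash. The sketch below uses only the standard library; `store_snapshot` and `snapshot_url` are hypothetical helper names, and it assumes the video URL from the response is a plain HTTP download.

```python
import hashlib
import urllib.request
from pathlib import Path


def store_snapshot(data: bytes, out_dir: Path) -> Path:
    """Write the clip under its SHA-256 so identical bytes map to one file."""
    digest = hashlib.sha256(data).hexdigest()
    out_dir.mkdir(parents=True, exist_ok=True)
    path = out_dir / f"{digest}.mp4"
    if not path.exists():
        path.write_bytes(data)
    return path


def snapshot_url(url: str, out_dir: Path) -> Path:
    """Download the rendered clip and store it content-addressed."""
    with urllib.request.urlopen(url, timeout=60) as response:
        return store_snapshot(response.read(), out_dir)
```

Naming the file by hash also gives you a cheap check later: if a re-render of the same seed lands under a different filename, the bytes changed.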

Seedance 2.0 is honest about this. Its docs note that results may vary slightly even with the same seed. That is not a bug. It is the nature of a model that has non-deterministic kernels under the hood.

When reproducibility matters, when it does not

If you are doing A/B copy tests, seed discipline matters. You want to change one variable at a time.
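A small guard can enforce the one-variable rule before you spend money on a render. This is a sketch under the assumption that each arm of the test is a plain arguments dict like the one in the call above; `changed_keys` and `single_change` are hypothetical names.

```python
_MISSING = object()  # distinguishes "key absent" from "key set to None"


def changed_keys(control: dict, variant: dict) -> set:
    """Return every argument name whose value differs between the two arms."""
    keys = control.keys() | variant.keys()
    return {
        key for key in keys
        if control.get(key, _MISSING) != variant.get(key, _MISSING)
    }


def single_change(control: dict, variant: dict) -> str:
    """Return the one changed argument, or raise if the test is confounded."""
    diff = changed_keys(control, variant)
    if len(diff) != 1:
        raise ValueError(f"expected exactly one changed field, got {sorted(diff)}")
    return next(iter(diff))
```

For a copy test the returned key should be `prompt`. If it comes back as `seed`, or the call raises, the comparison is measuring something other than your copy.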

If you are doing creative exploration, seeds matter less than you think. A fresh seed per attempt teaches you what the model can do. Save the good seeds, discard the rest.

If you are delivering client work, store the final MP4, not the recipe. Seed, prompt, and params are documentation. The file is the product.

At around $0.10 per second on Wan 2.7, a five-second clip is $0.50. Running three seeds to pick one costs $1.50. That is cheap insurance against a single unlucky draw.
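The same arithmetic as a tiny helper, for budgeting a batch before you launch it. The rate is this article's approximate figure, not a quoted price, and `render_cost_cents` is a hypothetical name. It works in whole cents to avoid floating-point rounding.

```python
# Approximate rate from this article: about $0.10 per second of video.
PRICE_CENTS_PER_SECOND = 10


def render_cost_cents(duration_s: int, n_seeds: int = 1) -> int:
    """Estimated cost in cents of rendering n_seeds clips of duration_s seconds."""
    return PRICE_CENTS_PER_SECOND * duration_s * n_seeds


single = render_cost_cents(5)        # one five-second clip
triple = render_cost_cents(5, 3)     # three seeds, pick the best
print(f"${single / 100:.2f} per clip, ${triple / 100:.2f} for three seeds")
```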