Review Workflows: Cutting the Reject Pile From Day One

How to structure creative review so the generation budget goes toward improvements, not re-reviews.

Every extra review round is generation budget not spent on improvement. If your team spends Thursday looking at clips that were already rejected on Tuesday, you are not reviewing; you are circulating. The structure below is designed to cut that loop.

The rule

One decision per clip per review. If a reviewer cannot decide, the clip goes to a named next step, not back into the pile.

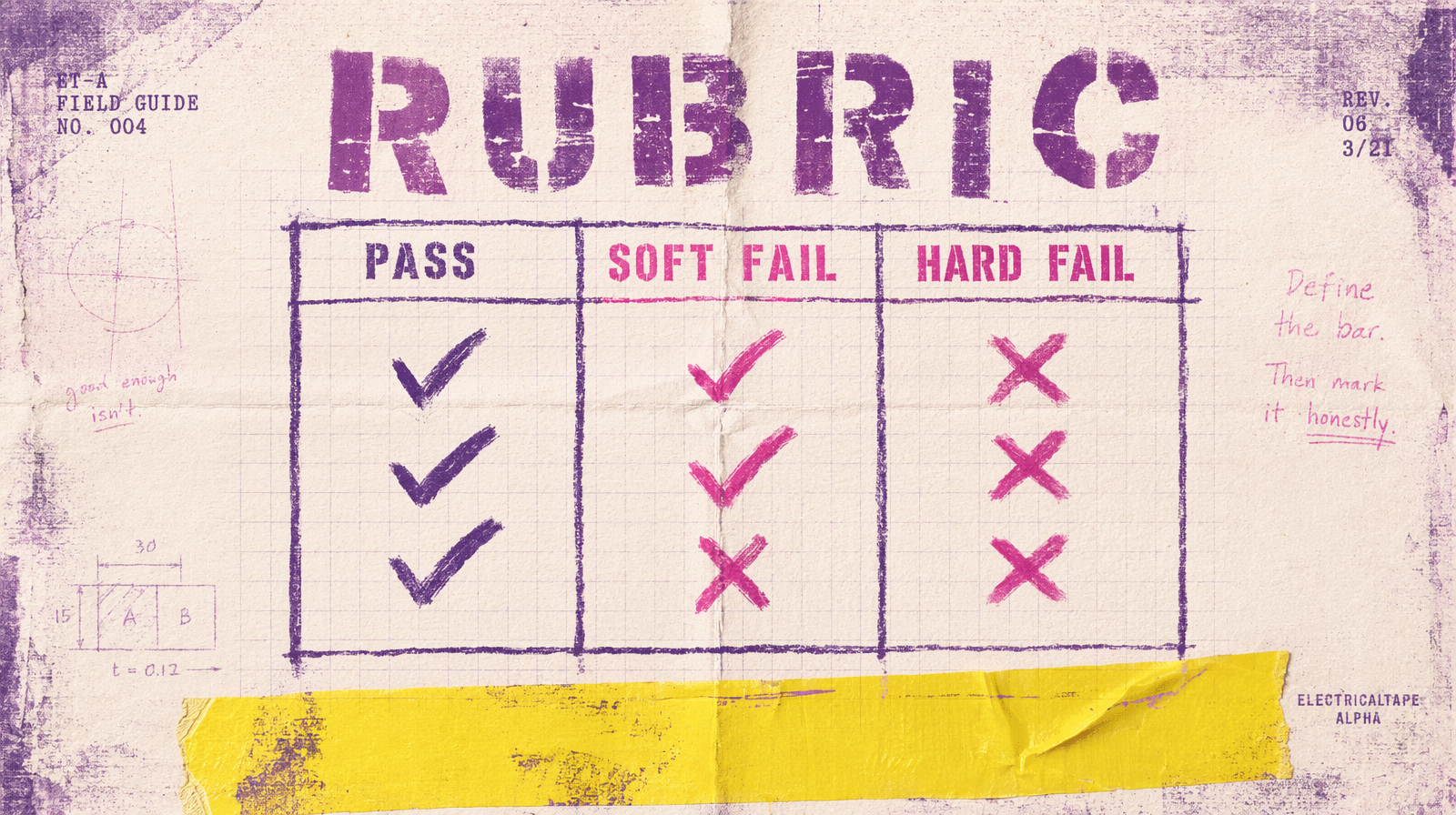

Three outcomes, not two

Pass, soft fail, hard fail. A soft fail is "close, but not quite," and it comes with a named action, which is usually "re-prompt with these three changes." A hard fail is "this approach is wrong," and it comes with a specific reason. "Meh" is not an outcome. If a reviewer writes "meh," kick it back with a request for a named action.

The batch size

Six to twelve clips per review. With fewer than six, reviewers lose context. With more than twelve, they lose attention. Two short reviews a day beat one long review.
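
As a rough sketch of how a day's clips could be split to respect that range: the helper below spreads clips evenly across the fewest batches that stay at or under twelve, so no batch ends up as a tiny leftover. The function name `toReviewBatches` and the `maxSize` parameter are hypothetical, not part of any existing tool.

```javascript
// Hypothetical helper: split clips into evenly sized review batches.
// Uses the fewest batches that keep every batch at or under maxSize.
// With 13 or more clips, even splitting also keeps every batch at six or more.
function toReviewBatches(clips, maxSize = 12) {
  if (clips.length === 0) return [];
  const batchCount = Math.ceil(clips.length / maxSize);
  const baseSize = Math.floor(clips.length / batchCount);
  const extra = clips.length % batchCount; // the first `extra` batches get one more clip
  const batches = [];
  let start = 0;
  for (let i = 0; i < batchCount; i++) {
    const size = baseSize + (i < extra ? 1 : 0);
    batches.push(clips.slice(start, start + size));
    start += size;
  }
  return batches;
}
```

Thirteen clips come out as batches of seven and six rather than twelve and one. A day with fewer than six clips still yields one short batch, since there is nothing to merge it with.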

The rubric

Three columns, never more: hook strength, visual fidelity, brand fit. Each is scored pass or fail. A clip that passes all three ships. A clip that fails one is a soft fail. A clip that fails two or more is a hard fail. Everyone uses the same rubric every time.
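
The scoring rule is mechanical enough to express as a small function. The sketch below is one possible encoding, not a prescribed implementation: `outcomeFor` is a hypothetical name, the keys `hook`, `fidelity`, and `brand` and the outcome strings follow the record example in this article, and treating three failed columns as a hard fail is an assumption.

```javascript
// Hypothetical helper: derive the review outcome from the three rubric columns.
function outcomeFor(scores) {
  const columns = ["hook", "fidelity", "brand"];
  for (const column of columns) {
    if (scores[column] !== "pass" && scores[column] !== "fail") {
      throw new Error(`Rubric column "${column}" must be "pass" or "fail"`);
    }
  }
  const fails = columns.filter((column) => scores[column] === "fail").length;
  if (fails === 0) return "pass";      // all three pass: the clip ships
  if (fails === 1) return "soft_fail"; // one failed column
  return "hard_fail";                  // two failed columns (three assumed to count too)
}
```

Deriving the outcome from the scores, rather than letting reviewers type it, keeps the outcome and the rubric from disagreeing in the stored record.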

What to capture

Per clip, record the request_id, the rubric scores, the named action if any, the reviewer, and the timestamp. Do not capture freeform notes unless the clip is a hard fail, where the specific reason has to be written down. Freeform notes anywhere else are a signal that the rubric is wrong.

    await db.reviews.insert({
      request_id,
      reviewer: user.email,
      hook: "pass",
      fidelity: "pass",
      brand: "fail",
      outcome: "soft_fail",
      action: "swap outdoor scene for indoor, keep pacing",
      created_at: new Date()
    });
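
A check run before the insert can enforce the capture rules. This is a minimal sketch under stated assumptions: `validateReview` is a hypothetical name, and the `reason` and `notes` fields are illustrative additions that do not appear in the record above.

```javascript
// Hypothetical pre-insert check. Returns a list of problems; empty means OK.
// `reason` and `notes` are assumed field names, not part of the original record.
function validateReview(record) {
  const errors = [];
  for (const field of ["request_id", "reviewer", "created_at"]) {
    if (!record[field]) errors.push(`missing ${field}`);
  }
  if (!["pass", "soft_fail", "hard_fail"].includes(record.outcome)) {
    errors.push("outcome must be pass, soft_fail, or hard_fail");
  }
  if (record.outcome === "soft_fail" && !record.action) {
    errors.push("soft_fail needs a named action");
  }
  if (record.outcome === "hard_fail" && !record.reason) {
    errors.push("hard_fail needs a specific reason");
  }
  if (record.notes && record.outcome !== "hard_fail") {
    errors.push("freeform notes are only recorded on a hard_fail");
  }
  return errors;
}
```

A reviewer who writes "meh" fails the outcome check and gets the record kicked back, which is the behavior described earlier.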

How to get rid of the reject pile

Review clips the day they are generated. A reject pile grows when review lags generation. The fastest way to shrink it is to slow generation until review catches up, not to run a cleanup sprint.
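
One way to slow generation is to gate it on the size of the unreviewed backlog. The sketch below is illustrative only: `generationAllowance` is a hypothetical name, and the default capacity of 24 assumes two reviews a day of at most twelve clips each.

```javascript
// Hypothetical gate: how many new clips may be generated today without
// letting review fall behind. Adjust the capacity to your team's real throughput.
function generationAllowance(unreviewedCount, dailyReviewCapacity = 24) {
  if (unreviewedCount < 0 || dailyReviewCapacity < 0) {
    throw new RangeError("counts must be non-negative");
  }
  // Whatever review capacity the backlog does not consume is free for new clips.
  return Math.max(0, dailyReviewCapacity - unreviewedCount);
}
```

With a backlog of 50 and the default capacity, the allowance is 0, so generation pauses until review catches up.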

The re-prompt loop

A soft fail becomes a new prompt, a new generation, a new review. You do not re-review the original clip. If the new generation is a hard fail, step back and ask whether the approach is right, not whether the prompt is right.

Who reviews

Two reviewers for anything that ships, one for anything that goes into a draft queue. Rotating reviewers every two weeks keeps calibration from drifting. When your rubric and your reviewers are stable, your reject pile gets small on its own.

The cost of not doing this

A pile of 50 unreviewed clips at $0.60 each is $30 of frozen spend, plus the cost of the time you will burn later sorting them. Multiply by ten pipelines and that is $300, which is no longer abstract. Every clip reviewed the day it was generated is a clip you do not have to regenerate because you forgot what the original prompt was trying to do.
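
The arithmetic is simple enough to keep as a small script and rerun with your own numbers. This sketch assumes every pipeline carries the same 50-clip pile and counts only generation spend, not sorting time; the function names are hypothetical.

```javascript
// Work in integer cents to avoid floating-point rounding on money.
function frozenSpendCents(unreviewedClips, costPerClipCents) {
  return unreviewedClips * costPerClipCents;
}

function formatDollars(cents) {
  return `$${(cents / 100).toFixed(2)}`;
}

const perPipeline = frozenSpendCents(50, 60); // 50 clips at 60 cents each
const acrossTen = perPipeline * 10;           // ten pipelines, same pile size assumed

console.log(formatDollars(perPipeline)); // prints "$30.00"
console.log(formatDollars(acrossTen));   // prints "$300.00"
```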