Debugging IN_PROGRESS Forever Jobs

When a fal job never seems to complete, run these five checks before you cancel and resubmit. Cancel is always last.

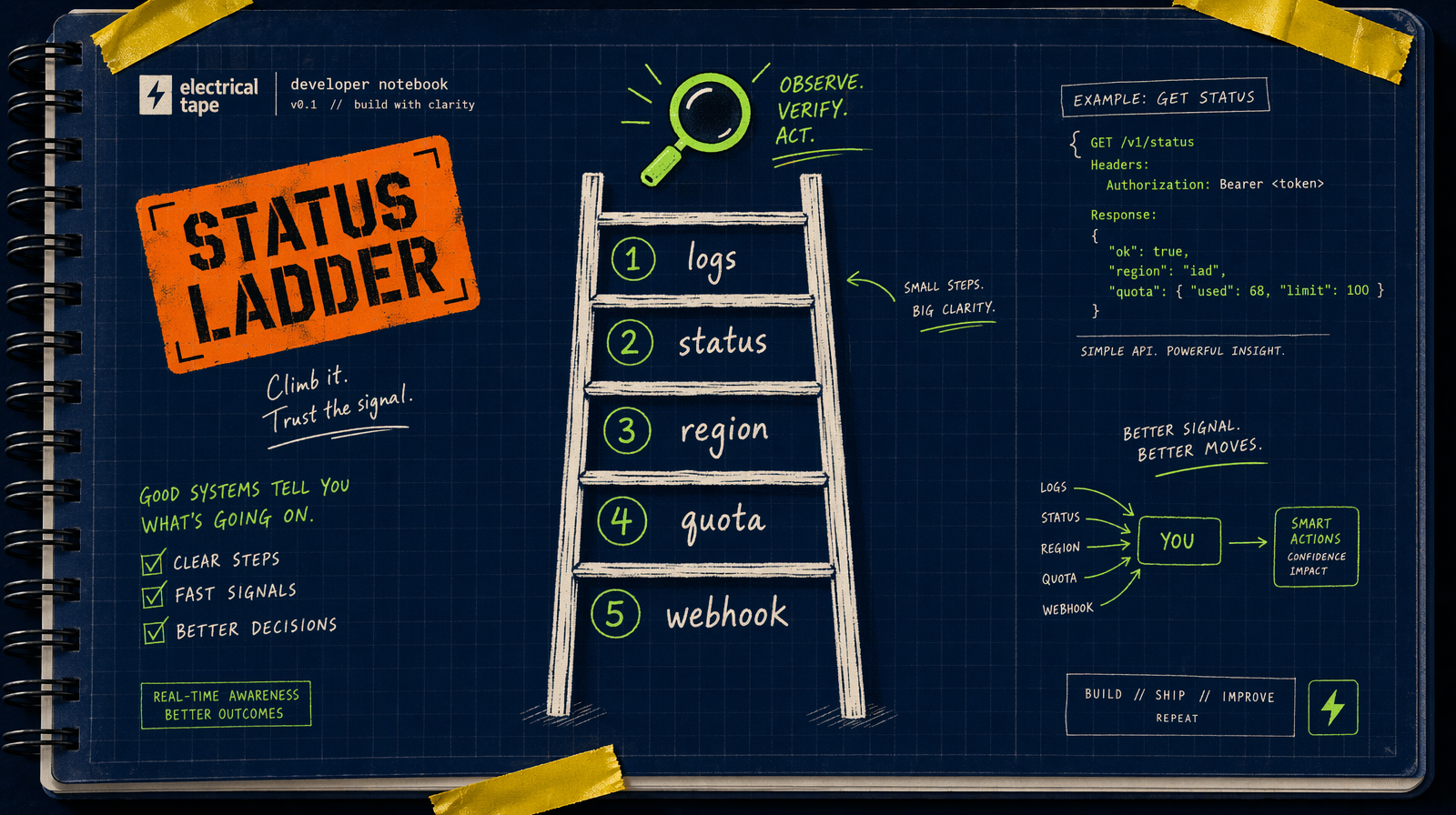

IN_PROGRESS does not mean broken

A job sitting at IN_PROGRESS longer than you expect is not automatically stuck. Before you cancel and resubmit, run five checks. Cancel is last.

Check one: logs at the endpoint

fal.queue.status with logs: true returns whatever the worker has printed. Ninety percent of "stuck" jobs tell you exactly what they are waiting on.

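```typescript
import { fal } from "@fal-ai/client";

// requestId is the ID returned when the job was submitted.
const status = await fal.queue.status("fal-ai/veo3.1", { requestId, logs: true });

console.log(status.status);
status.logs?.forEach((l) => console.log(l.timestamp, l.message));
```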

Recent timestamps mean it is alive. Last log 30 seconds old at 90 percent? Wait. Last log five minutes old at 10 percent? Continue.
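The wait-or-continue call can be sketched as a small pure function. The five-minute cutoff, the `LogEntry` shape, and the assumption that timestamps parse with `new Date(...)` are illustrative choices, not values fal documents; tune them per endpoint.

```typescript
// Shape of a log line as read in the snippet above (timestamp + message).
interface LogEntry {
  timestamp: string; // assumed to be parseable by `new Date(...)`
  message: string;
}

type Liveness = "no-logs" | "alive" | "stale";

// Hypothetical cutoff: a last log at least this old counts as stale.
const STALE_AFTER_MS = 5 * 60 * 1000;

function classifyLiveness(
  logs: LogEntry[] | undefined,
  now: Date = new Date(),
  staleAfterMs: number = STALE_AFTER_MS,
): Liveness {
  const last = logs?.[logs.length - 1];
  if (!last) return "no-logs";
  const ageMs = now.getTime() - new Date(last.timestamp).getTime();
  // An unparseable timestamp yields NaN; treat it as stale rather than alive.
  if (Number.isNaN(ageMs)) return "stale";
  return ageMs < staleAfterMs ? "alive" : "stale";
}
```

"alive" means wait; "stale" or "no-logs" means move on to the next check.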

Check two: the endpoint status

A single slow endpoint during a burst is common for Veo 3.1 at 4K, Kling v3 Pro, and Wan 2.7 at 15 seconds. Queue times of 45 seconds to a minute on those during peak hours are not a bug.

If nobody else in the community is reporting slowdowns and the endpoint looks healthy, the issue is most likely your payload. Continue.

Check three: the input payload

Two classes of silent-ish failure.

- Oversized inputs: image-to-video with a 12 MB reference, or audio with a 20 MB WAV. The worker is downloading your asset. Slow storage = long IN_PROGRESS.

- Ambiguous prompts: extremely long prompts or content that triggers Veo 3.1's auto_fix=true rewrites. The result finishes, but slower.

Look for log messages like "downloading image_url" or "prompt expanded".
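Scanning for those messages is easy to automate. The sketch below matches the two substrings quoted above; real worker logs may be worded differently, and `findPayloadHints` and the hint descriptions are hypothetical names introduced for illustration.

```typescript
interface LogEntry {
  timestamp: string;
  message: string;
}

// Substrings taken from the examples in the text; extend as you see new ones.
const PAYLOAD_HINTS: { needle: string; meaning: string }[] = [
  { needle: "downloading image_url", meaning: "worker is still fetching the input asset" },
  { needle: "prompt expanded", meaning: "prompt was rewritten before generation" },
];

// Returns the distinct payload-related explanations found in the logs.
function findPayloadHints(logs: LogEntry[]): string[] {
  const found: string[] = [];
  for (const { message } of logs) {
    const lower = message.toLowerCase();
    for (const { needle, meaning } of PAYLOAD_HINTS) {
      if (lower.includes(needle) && !found.includes(meaning)) found.push(meaning);
    }
  }
  return found;
}
```

An empty array means the logs give no payload explanation, so keep going.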

Check four: region and account concurrency

If you just fired 30 jobs and this is the 31st, you are queued behind a hidden worker limit. Status says IN_QUEUE or IN_PROGRESS but nothing is actively computing.

Count your own in-flight jobs:

```sql
SELECT count(*) FROM generations WHERE status IN ('IN_QUEUE','IN_PROGRESS');
```

Near your ceiling? That is your answer. If not, submit a tiny Pixverse v6 5-second draft (around $0.15 at 360p, no audio) as a canary. If that flies through, your infra is fine and the original is just waiting.
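Turning the in-flight count into a verdict could look like this sketch. Because the worker limit is hidden, `ceiling` is an assumption you supply yourself (an estimate or your plan's limit), and the 90% `nearRatio` is arbitrary.

```typescript
type ConcurrencyVerdict = "at-ceiling" | "near-ceiling" | "headroom";

// Compares your own in-flight count against an assumed concurrency ceiling.
function concurrencyVerdict(
  inFlight: number,
  ceiling: number,
  nearRatio: number = 0.9,
): ConcurrencyVerdict {
  if (ceiling <= 0) throw new RangeError("ceiling must be positive");
  if (inFlight >= ceiling) return "at-ceiling";
  if (inFlight >= ceiling * nearRatio) return "near-ceiling";
  return "headroom";
}
```

With "headroom", concurrency is probably not the cause and the canary job is the next thing to try.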

Check five: the webhook

If you submitted with webhookUrl and your DB still says IN_QUEUE, the job may have completed and you missed the notification. Two ways that breaks.

- Handler returned a non-2xx response. fal retries, then stops. Upstream says COMPLETED, but your DB never got the update.

- Webhook URL changed during deploy. Delivery fires to a 404.

Poll directly.

```typescript
const final = await fal.queue.result("fal-ai/veo3.1", { requestId });
```

If this succeeds, the job is done. Fix the webhook, update your DB with the result, move on. No re-generation.
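If missed webhooks happen more than once, a periodic reconciliation pass saves you from checking by hand. The sketch below assumes a `QueueLike` interface that mirrors only the two queue calls used in this article, so it can be run against a fake; `reconcile`, `Row`, and `saveResult` are hypothetical names, and the result payload is left opaque.

```typescript
// Minimal structural stand-in for the two queue calls used in this article.
interface QueueLike {
  status(endpoint: string, opts: { requestId: string }): Promise<{ status: string }>;
  result(endpoint: string, opts: { requestId: string }): Promise<unknown>;
}

// A row your DB still believes is pending.
interface Row {
  endpoint: string;
  requestId: string;
}

// Asks upstream about each pending row and stores results for finished jobs.
// Returns the request IDs that had actually completed.
async function reconcile(
  queue: QueueLike,
  pendingRows: Row[],
  saveResult: (requestId: string, result: unknown) => Promise<void>,
): Promise<string[]> {
  const recovered: string[] = [];
  for (const { endpoint, requestId } of pendingRows) {
    const { status } = await queue.status(endpoint, { requestId });
    if (status !== "COMPLETED") continue; // still pending upstream; leave it alone
    const result = await queue.result(endpoint, { requestId });
    await saveResult(requestId, result);
    recovered.push(requestId);
  }
  return recovered;
}
```

Passing the queue client in as a parameter keeps the function testable without network access; in production you would hand it the real client's queue object.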

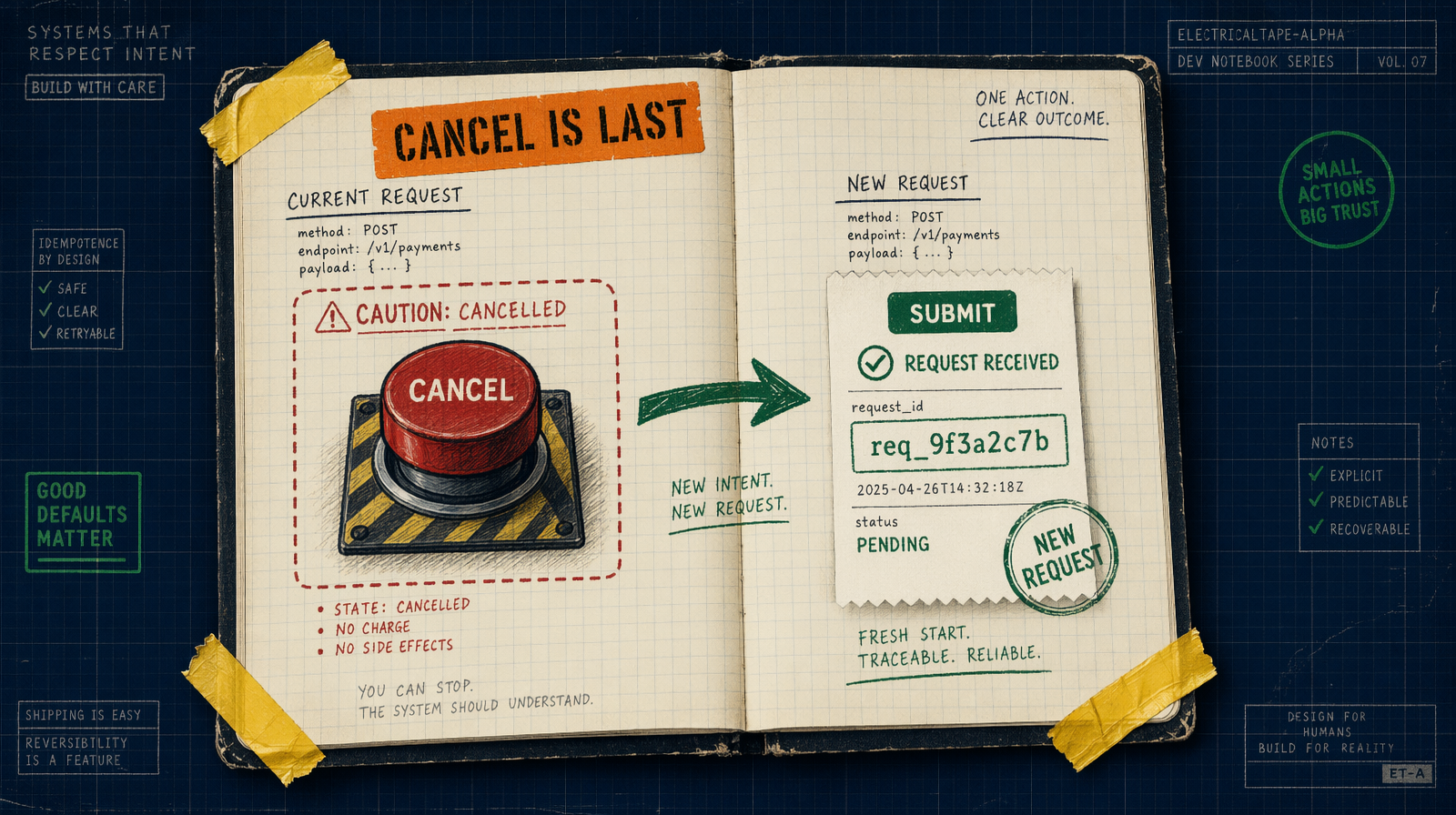

Now, and only now, cancel

If logs are stale, endpoint is healthy, payload is sane, concurrency is not it, no webhook pending, then cancel.

```typescript
await fal.queue.cancel("fal-ai/veo3.1", { requestId });
await db.query("UPDATE generations SET status='CANCELLED' WHERE request_id=$1", [requestId]);
```
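The condition guarding that cancel call can be made explicit, so it never runs on a hunch. This is a sketch; the `Checks` field names are hypothetical labels for the five checks in this article.

```typescript
// One boolean per check; true means the check found nothing that explains the delay.
interface Checks {
  logsStale: boolean;
  endpointHealthy: boolean;
  payloadSane: boolean;
  underConcurrencyCeiling: boolean;
  webhookRuledOut: boolean;
}

// Returns the reasons that still argue against cancelling.
// Cancel only when the returned list is empty.
function blockersToCancel(c: Checks): string[] {
  const blockers: string[] = [];
  if (!c.logsStale) blockers.push("logs are still moving");
  if (!c.endpointHealthy) blockers.push("endpoint is slow for everyone");
  if (!c.payloadSane) blockers.push("payload explains the delay");
  if (!c.underConcurrencyCeiling) blockers.push("job is waiting on your own concurrency");
  if (!c.webhookRuledOut) blockers.push("job may already be done upstream");
  return blockers;
}
```

Returning the reasons rather than a bare boolean gives you something to log when a cancel is refused.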

Resubmit with a different strategy:

- Veo 3.1 at 4K: draft first on Veo 3.1 Lite at $0.05/sec.

- Wan 2.7 at 15s: split into two 8 second clips.

- Image-to-video with a large asset: upload to fal.storage first.
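The Wan 2.7 split generalizes to other durations. A sketch, assuming whole-second clips of equal length and a per-clip cap that you pass in yourself (`maxClipSeconds` is a parameter here, not a documented limit):

```typescript
// Splits a total duration into the fewest equal-length clips that fit under the cap.
// Clip lengths are rounded up to whole seconds, so the total may slightly exceed the request.
function splitIntoClips(totalSeconds: number, maxClipSeconds: number): number[] {
  if (totalSeconds <= 0 || maxClipSeconds <= 0) {
    throw new RangeError("durations must be positive");
  }
  const count = Math.ceil(totalSeconds / maxClipSeconds);
  const clipLength = Math.ceil(totalSeconds / count);
  return Array.from({ length: count }, () => clipLength);
}
```

With a cap of 8 seconds, a 15 second request becomes two 8 second clips, matching the suggestion above.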

A debug helper you will reuse

```typescript
async function debugJob(endpoint: string, requestId: string) {
  const s = await fal.queue.status(endpoint, { requestId, logs: true });
  return {
    status: s.status,
    lastLogTime: s.logs?.at(-1)?.timestamp,
    lastLogMsg: s.logs?.at(-1)?.message,
    inFlight: await db.oneOrNone(
      "SELECT count(*)::int AS c FROM generations WHERE status IN ('IN_QUEUE','IN_PROGRESS')",
    ),
  };
}
```

Four data points. Most of the time, one obvious cause.