Persisting Generation History in Postgres

A schema for fal generation metadata with indexes that stay fast at 100k rows. Ships with SQL.

The one table you need

You do not need a star schema. You need one table with enough metadata to answer the five questions that come up weekly:

- How much did we spend this week, by model?

- Which user's job is stuck?

- How often does endpoint X fail?

- What was the exact prompt for this render?

- Can I replay last Tuesday's failed jobs?

Here is the minimum that answers all of them.

The schema

1CREATE TABLE generations (2 id BIGSERIAL PRIMARY KEY,3 request_id TEXT UNIQUE NOT NULL,4 user_id TEXT NOT NULL,5 endpoint TEXT NOT NULL,6 status TEXT NOT NULL CHECK (status IN7 ('IN_QUEUE','IN_PROGRESS','COMPLETED','FAILED','CANCELLED')),8 inputs JSONB NOT NULL,9 output_url TEXT,10 seed BIGINT,11 duration_s REAL,12 cost_cents INTEGER,13 error TEXT,14 created_at TIMESTAMPTZ NOT NULL DEFAULT now(),15 finished_at TIMESTAMPTZ16);

Eleven columns. inputs holds the whole payload as JSONB so you can query without a second table.

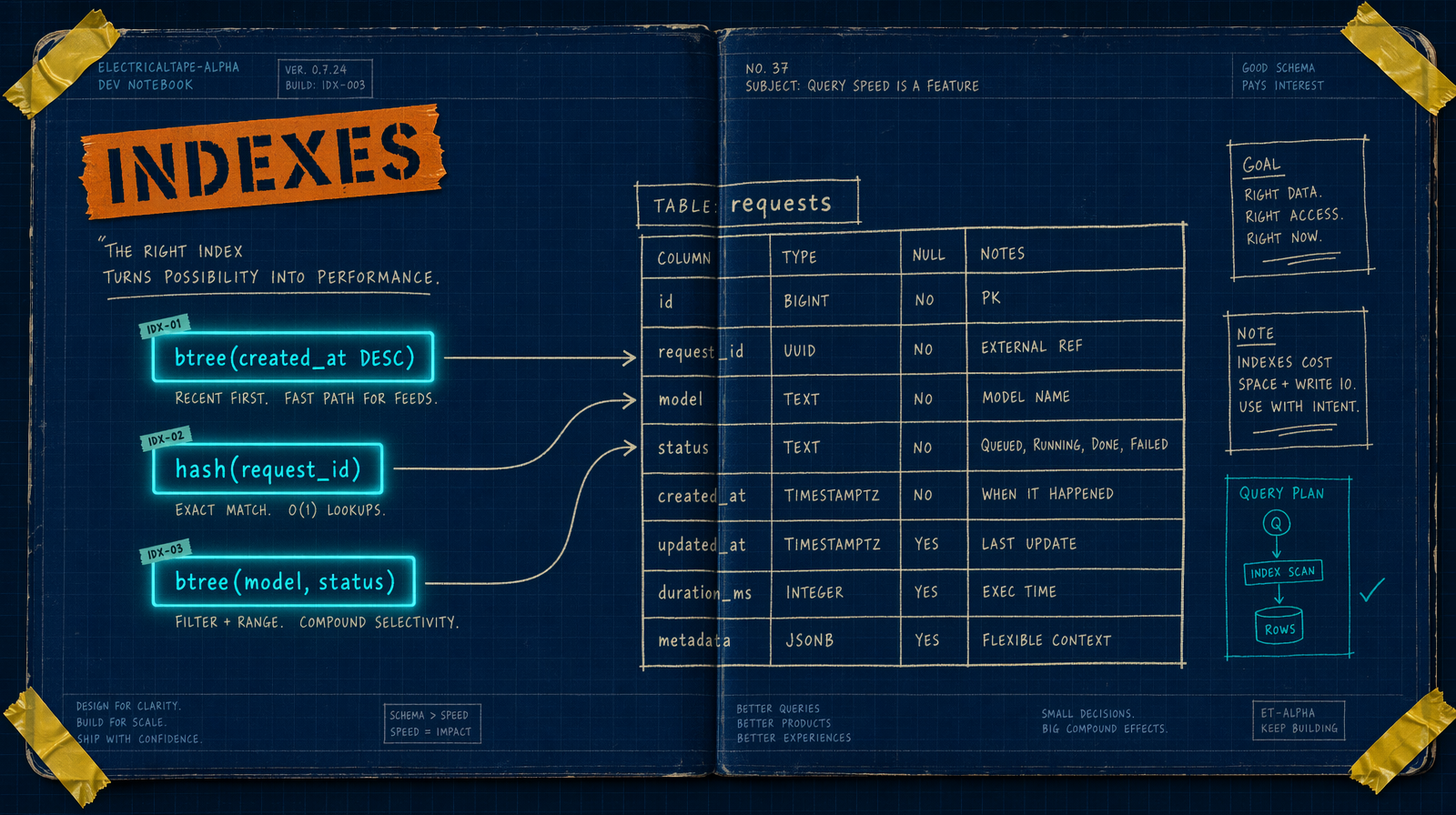

The indexes that keep you fast at 100k rows

1CREATE INDEX generations_user_created_idx2 ON generations (user_id, created_at DESC);34CREATE UNIQUE INDEX generations_request_idx ON generations (request_id);56CREATE INDEX generations_endpoint_status_idx7 ON generations (endpoint, status);89CREATE INDEX generations_inputs_gin ON generations USING GIN (inputs);

Four indexes. First three handle OLTP queries. The GIN index lets you run WHERE inputs @> '{"resolution":"4k"}' without a sequential scan.

Writing the row at submit time

Every job writes a row before the network call returns. That row is your recovery handle.

1const estimated = Math.round(durationSec * pricePerSec * 100);23const { request_id } = await fal.queue.submit(endpoint, {4 input,5 webhookUrl: `${process.env.APP_URL}/api/fal/webhook`,6});78await db.query(9 `INSERT INTO generations (request_id, user_id, endpoint, status, inputs, duration_s, cost_cents)10 VALUES ($1,$2,$3,'IN_QUEUE',$4,$5,$6)`,11 [request_id, userId, endpoint, JSON.stringify(input), durationSec, estimated],12);

cost_cents is an estimate at submit time. For Wan 2.7 ($0.10/sec) and Veo 3.1 ($0.40/sec) price is deterministic. For Seedance 2.0 ($0.014 per unit), reconcile on completion.

Updating on webhook

1UPDATE generations2SET status = $1,3 output_url = $2,4 seed = $3,5 finished_at = now()6WHERE request_id = $47 AND status IN ('IN_QUEUE','IN_PROGRESS');

The AND status IN (...) clause is replay safety. A duplicate webhook updates zero rows and the handler still returns 200.

Queries you will write in week one

1-- spend this week by model2SELECT endpoint, count(*) AS jobs, sum(cost_cents)/100.0 AS dollars3FROM generations4WHERE created_at > now() - interval '7 days' AND status = 'COMPLETED'5GROUP BY endpoint ORDER BY dollars DESC;67-- failure rate per endpoint8SELECT endpoint,9 count(*) FILTER (WHERE status='FAILED')::float / NULLIF(count(*),0) AS fail_rate10FROM generations11WHERE created_at > now() - interval '7 days'12GROUP BY endpoint HAVING count(*) > 20;1314-- 4K Veo 3.1 renders this week15SELECT request_id, user_id, cost_cents/100.0 AS dollars16FROM generations17WHERE endpoint='fal-ai/veo3.1' AND inputs @> '{"resolution":"4k"}'18 AND created_at > now() - interval '7 days';1920-- stuck jobs21SELECT request_id, endpoint, created_at FROM generations22WHERE status IN ('IN_QUEUE','IN_PROGRESS')23 AND created_at < now() - interval '30 minutes' LIMIT 50;

Retention

At 5000 renders/day that is 1.8M rows/year. Tiny for Postgres with these indexes. At 10M rows, partition by month or split inputs into a sibling table keyed on request_id. Do not do either before you have to.

What not to do

Do not store video bytes in Postgres; URL only. Do not put prompt as a top level column alongside inputs; two sources of truth drift. Do not skip the unique index on request_id; it is your webhook idempotency. Do not use a boolean is_completed; you need FAILED and CANCELLED later.

Four indexes, one JSONB, one status enum. Survives the first million rows without a migration.